How we ran a qualitative research study remotely without losing our minds

For several years now, we’ve been helping agile teams conduct powerful retrospective meetings across the bounds of space and timezones.

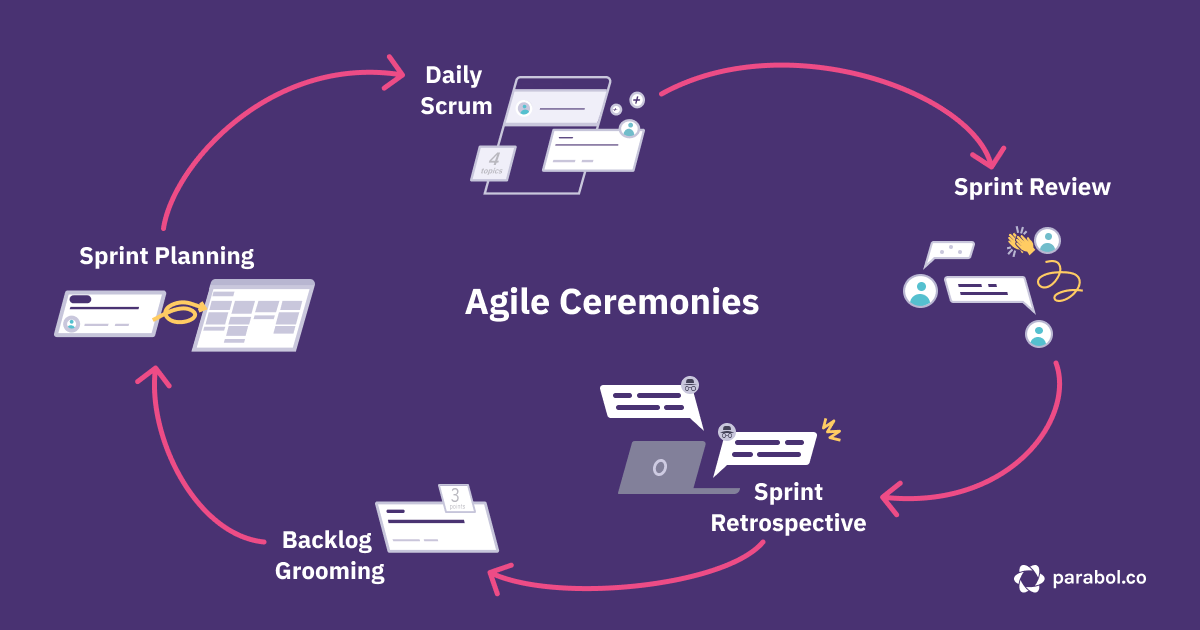

But retrospectives are just one of the five agile ceremonies teams adopt in their quest to be more customer-centric, alongside Sprint Planning, Backlog Refinement, Daily Scrum (or stand-ups) and Sprint Reviews.

We realized that if we wanted to help more teams make their meetings worth it, we needed to branch out and support more types of meetings. But where to start?

We found ourselves, as many product teams do, with an idea of the area we wanted to tackle, but not enough information about what customers were truly struggling with.

As a fully-remote team, we also faced another challenge: how do we learn about our customers and synthesize those learnings into insights, without ever setting foot in a conference room together?

We used a variety of tools – from emails and surveys to Parabol itself – to engage with a diverse array of customers and define some insights for the next phase of our product.

Along the way, we learned some lessons that might help you too.

Let’s discuss what makes running remote research studies so difficult, how we tackled those challenges, and what you can do to make your UX research top-notch, even at a distance.

What makes remote UX research hard: scheduling the right participants, staffing interviews and finding patterns in the data

Understanding users’ problems well enough to craft solutions they hadn’t even dreamed of is hard.

But when your team isn’t in the same office, it is even more challenging.

How do we find qualified participants for our UX research?

First and foremost, the team needs to identify the right types of folks to participate in the study, and then get them scheduled.

Participants should have a mix of similarities and differences:

- Similarities: All participants are current or prospective users of the product. In our case, that meant engineering and product leaders. They needed to have this in common so that their insights were representative of the folks we’re building for.

- Differences: The overall pool of research participants should differ on dimensions that the whole pool of prospective users also differs on, such as gender, geography and company size. By having a diverse pool of participants, we could ensure that the insights we found were broadly true across our prospective base, rather than only true for a specific sub-segment of our potential users.

Because there’s often not much incentive to participate in research studies, getting the right folks to talk to you tends to be the hardest part.

How do we cover all the research interviews without overloading ourselves?

Once participants schedule time with you, the team has to lead those interviews, take notes, share recordings and more.

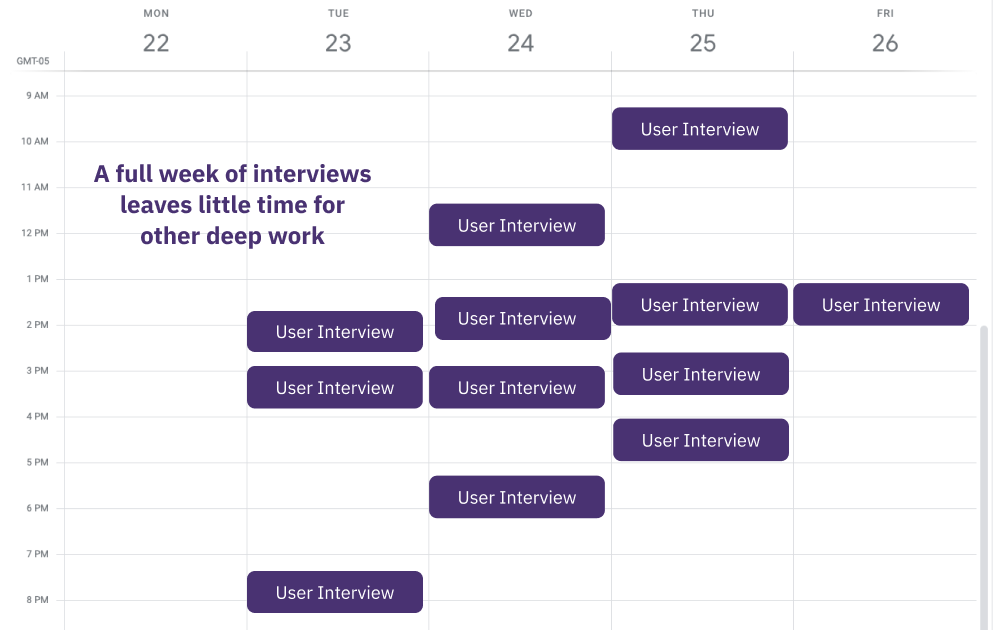

Because participants generally don’t have strong incentives to give you their time, the research team often needs to be extremely flexible, to make participation easy. If you’re open to participants from different time zones (geographic diversity FTW), that can lead to interviews being peppered throughout a day, often at odd times.

With a fragmented schedule, those running interviews often have only little bits of time between calls to do other work, which isn’t enough for deep work. When this burden falls to designers, that typically means design work stops for the length of the research, blocking or slowing down everyone else too.

One way to address this sprinkling of interviews is to share the load of leading or taking notes. If you can choose which interviews you’ll participate in, that lets you keep control of your schedule and find a balance between conducting research and continuing on with delivering work in other areas.

However, when the research team is also spread across locations and time zones, handing off the baton can be tricky. In order to have comparable insights from different interviewees, we wanted to maintain consistency between interviews in the kinds of questions we asked, what we dug deeper into and how we kept notes. We also wanted to make sure that interviewees had a positive experience speaking with us, which adds pressure to the folks leading the interview.

Too often, teams resolve this problem by putting this work onto one team member – “the researcher.” Yet that comes with its own set of challenges:

- Leading a research study becomes a heavier lift, which means fewer folks want to do it, which means less research overall.

- Fewer people directly hear feedback from customers – when you haven’t heard the feedback directly yourself, it’s less powerful, and more likely to be ignored or misunderstood.

We wanted to find a way to cover interviews so that the load was spread across the team, interviewees had a positive experience, and we felt the effort was manageable.

How do we synthesize hours of UX interviews into insights?

Once interviews are over, the team faces another obstacle: how to distill tens of hours of spoken feedback into distinct patterns.

With co-located teams, when the interviews are over, folks will gather into a conference room, write out a whole lot of sticky notes with what they heard, and then start the difficult task of finding patterns among the cards.

The physicality of moving and grouping makes the research more tangible.

In a remote context, that physicality is lost, and without it, the research findings can feel far away.

What’s more, with various tools that create digital versions of physical places, the solution can be worse than the problem. For example, with a collaborative text document, you often wind up with a long-form collection of bullet points and scraps of quotes. That’s hard to make sense of, makes it difficult to connect ideas that are far from each other in the document, and overall tends to lose the forest for the trees.

We wanted a solution that gave us the best of a physical whiteboard, plus the extra superpowers that come from using digital tools.

With all of these challenges in mind, we set out to see how we could conduct a UX research study remotely without falling prey to these issues.

Find UX research participants by leveraging your audience, then supplement with additional tools

When it came to finding research participants, we found that being fully remote proved to be an advantage.

Because we couldn’t ask folks to come to our office (we don’t have one!), we could make participation as easy as logging onto a video call, something accessible to any of our current or prospective users, regardless of their location.

First, we needed to define the similarities and differences that mattered to us with this particular study. We of course wanted to address our potential users, but because we were specifically asking about backlog grooming, we also wanted to speak with folks who already had experience doing this in a remote context and could speak to the current pain points. Outside of that, we wanted to cast the net wide, looking for a broad representation of geographies and company sizes.

Then, we thought through how to incentivize participation. We tried three techniques to maximize our chances:

- Speaking with folks who are already connected to us: We started with our own users, who have an interest in an improved product. Warm messages like this yield far better response rates than totally cold messages.

- Creating a financial incentive for cold interviews: We used Respondent.io to recruit non-users to speak with, and offered a reward for completed interviews.

- Offering different ways to engage, starting with the easiest: We experimented with sending a survey to customers, then asking for their time and being flexible with scheduling. By letting people engage as much as they wanted, we could get a greater overall volume of information.

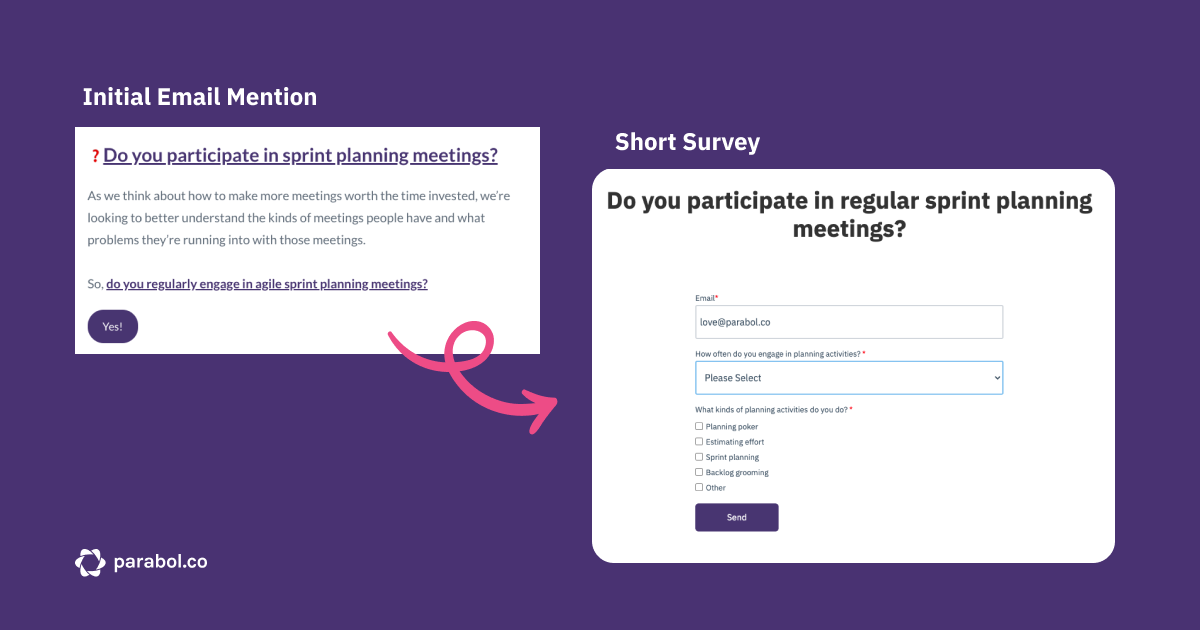

Once we had an idea of what we wanted to do, it was time to execute on our plan. We started with our own users. In a new feature announcement email, we included a 3-question survey about their planning practices.

With this survey, we could pre-qualify participants, and only ask the set of folks who fit our criteria to participate. As a bonus, we also got some general data about backlog grooming practices from the survey itself!
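If your survey tool lets you export responses, the pre-qualification step can be as small as a filter. Here's a minimal sketch in Python, assuming two yes/no screening criteria. The field names, the criteria and the sample emails are all hypothetical, not the actual questions from our survey.

```python
from dataclasses import dataclass


@dataclass
class SurveyResponse:
    email: str
    does_backlog_grooming: bool  # hypothetical screening question
    works_remotely: bool         # hypothetical screening question


def qualifies(response: SurveyResponse) -> bool:
    """Return True when the respondent meets both study criteria."""
    return response.does_backlog_grooming and response.works_remotely


def shortlist(responses: list[SurveyResponse]) -> list[str]:
    """Emails of the respondents to invite to book an interview."""
    return [r.email for r in responses if qualifies(r)]


# Made-up sample data, for illustration only.
responses = [
    SurveyResponse("a@example.com", True, True),
    SurveyResponse("b@example.com", True, False),
    SurveyResponse("c@example.com", False, True),
]

invitees = shortlist(responses)
print(invitees)
```

Only the respondents who pass every criterion get the follow-up email, and everyone else's answers still count toward the general survey data.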

Of course, we could’ve sent a dedicated email, but we didn’t want to bug folks who weren’t interested in participating.

Once we had our survey respondents, we sent a follow-up email asking those who met our criteria to book a time with us. We used HubSpot’s meeting tool to allow interviewees to book a time without a lot of back-and-forth.

This gave us a pool of existing users, but we know the world of agile extends far beyond our shores.

We wanted to speak with folks using existing backlog grooming tools to understand where their needs were too.

To do that, we turned to Respondent.io – an online tool for recruiting study participants. We chose Respondent because I had previous experience with it, and the tool did not disappoint. It allowed us to:

- Quickly (within 2 days) get replies from 36 potential participants who met our criteria

- Choose between potential interviewees based on how they responded to our questions

- Schedule calls with interviewees directly

- Pay out incentives (a $100 gift card) to everyone who attended an interview just by entering our credit card details

To set up our project, Respondent.io asked us to fill out what geography, industry, job title and other criteria we wanted to cover. On top of that, we set up additional qualifying questions that were hyper-specific to our research, such as “What agile planning activities do you regularly participate in?”

Because we had to fill out these options, we had useful conversations internally about who we were really interested in speaking with, and got to a much more specific definition of our target audience.

Share the load with your team with an accessible sign-up schedule and interview templates

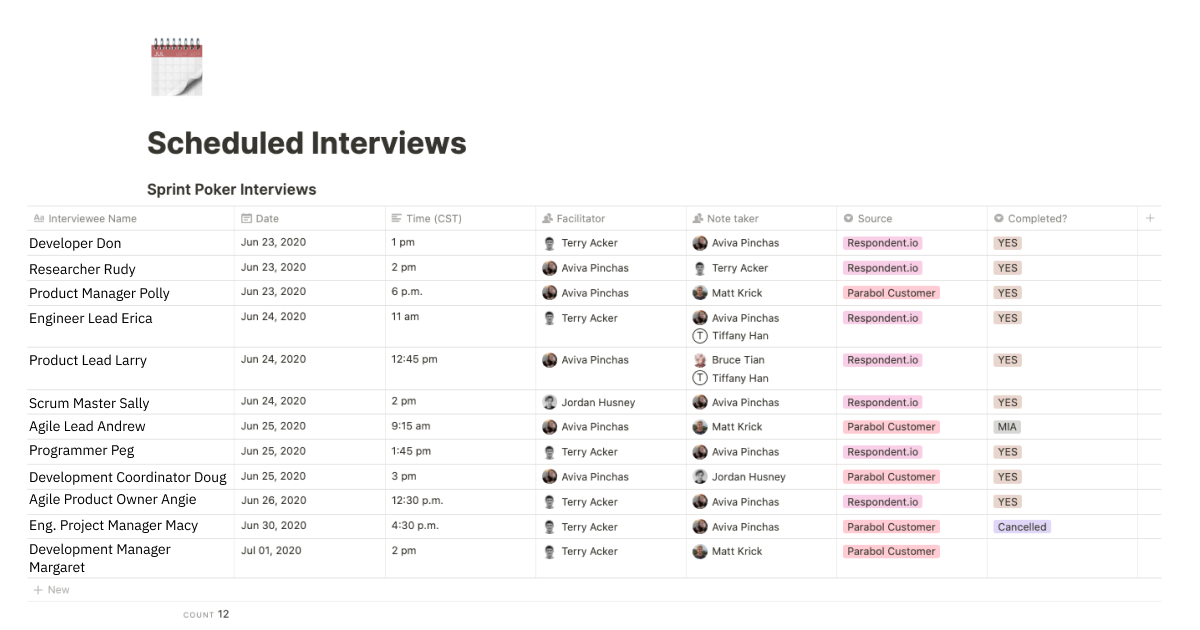

Before we asked folks to schedule, we defined which hours we wanted to be available for interviews. Once interviewees started taking up slots, it was time to sign up for facilitating and note-taking for all the sessions.

To do that, we created a schedule in our Notion wiki and then all signed up at our leisure.

When I noticed folks weren’t signing up to facilitate, I took on more facilitation slots and freed up note-taking slots. You may find a similar trend on your team, as not everyone is comfortable asking a stranger questions. By being flexible with the roles, we could include more team members in the research process, so they heard first-hand what users had to say.
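We kept an eye on the sign-up sheet by hand, but the same check is easy to sketch in code if your schedule lives somewhere exportable. The example below is a rough Python illustration, not something we ran and not a Notion API. The slot times, teammate names and row layout are made up.

```python
from collections import Counter

# Hypothetical sign-up sheet: one row per interview slot.
# None means nobody has claimed that role yet.
schedule = [
    {"slot": "Mon 09:00", "facilitator": "alex", "notetaker": "sam"},
    {"slot": "Mon 16:00", "facilitator": None, "notetaker": "sam"},
    {"slot": "Tue 11:00", "facilitator": "alex", "notetaker": "jo"},
    {"slot": "Wed 20:00", "facilitator": None, "notetaker": None},
]

ROLES = ("facilitator", "notetaker")


def open_roles(rows):
    """List (slot, role) pairs that still need someone to sign up."""
    return [(row["slot"], role) for row in rows for role in ROLES if row[role] is None]


def load_per_person(rows):
    """Count how many roles each teammate has claimed across all slots."""
    return Counter(row[role] for row in rows for role in ROLES if row[role] is not None)


unclaimed = open_roles(schedule)
load = load_per_person(schedule)

print("Still open:", unclaimed)
print("Load per person:", dict(load))
```

A list of open roles that is mostly facilitation slots is the same signal described above, and the per-person counts show whether one teammate is quietly carrying most of the interviews.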

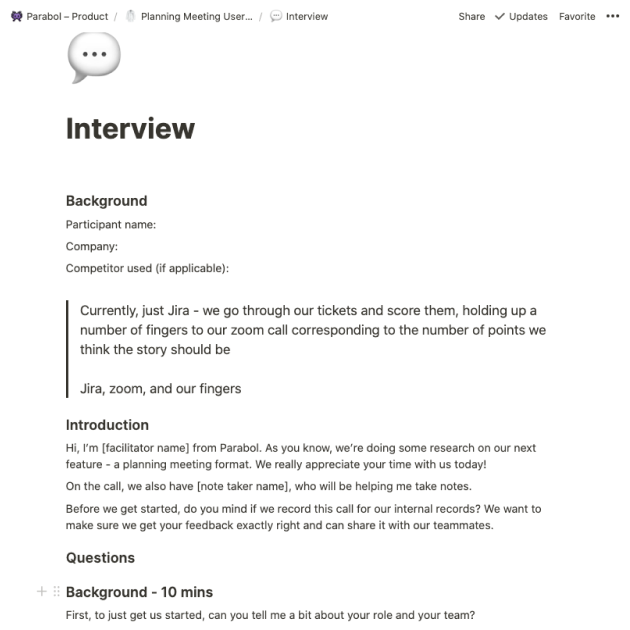

To share the load on asking questions, we went through a similar process. Terry, our lead designer, and I collaborated in Google Docs on a set of questions, and then moved the template into Notion.

For each call, we copied the Notion template and added in the relevant details about that particular user. As facilitators, we used that user-specific Notion doc to guide our questions, and notetakers used the same doc to add in their notes.

Having the notes in an accessible place provided a couple of other benefits:

- Folks who didn’t participate in a specific interview could easily review the notes after the fact and get a sense of the discussion

- We used Notion’s @mention options to raise interesting points to each other throughout the process, even if we hadn’t directly participated in the interview

By creating accessible places for both the schedule and the templates, and having those live in the same place, we could share the load on both scheduling and facilitating, without anyone being forced to focus exclusively on user interviews for their whole week.

Synthesize data using remote-friendly tools

AKA: How our online retrospective app helped us synthesize our research

They say that when you have a hammer, everything looks like a nail, and that sure seems to be true for our team.

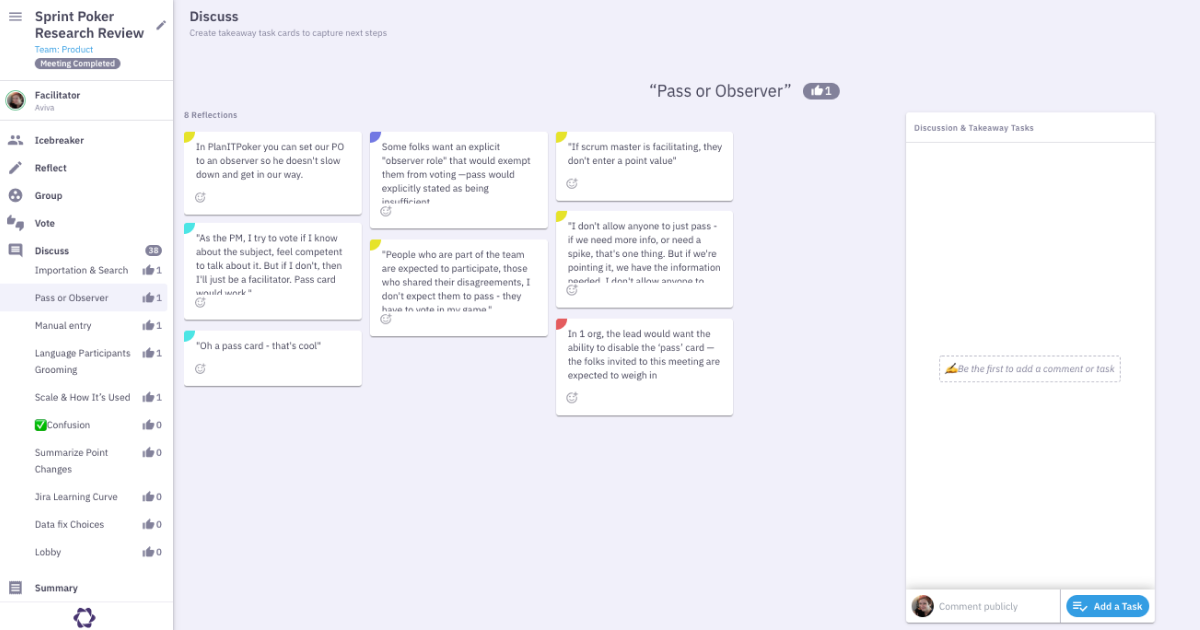

While we’d played with digital whiteboard tools in the past, we decided to use Parabol to synthesize our findings around backlog grooming and agile planning for a few reasons.

- Tactile experience: Adding Reflections in Parabol is similar to writing on sticky notes. When the team groups reflections, it’s similar to moving a sticky. We’ve found that this bridges the gap between the experience we’re used to having and the new virtual context we’re in, and we wanted to bring this into the process of synthesizing our research, too.

- Accessibility: We already use Parabol and our integrations to manage our work. We already have a Parabol team we use every week. That meant this was a more accessible place for synthesizing findings than trying a new tool just for this one task. We also know that adding takeaway tasks is built into Parabol, and we have practices for both tracking tasks on our Parabol board and syncing tasks to GitHub. All of this meant that our findings and takeaways would live where our work already lives.

- Individual input alongside team collaboration: The cycles of individual input and then collaborating as a team in a retrospective are pretty similar to what we wanted to do with research. In a retrospective, team members individually write their own reflections, and then collaborate to group them together. The team then votes individually and comes back together to talk through the topics that received the most votes. With this research study, we wanted to reflect individually on what we had heard, group those into findings together, individually vote on what we cared about, and then discuss together. The processes were so similar, it seemed silly to find another tool for the task when we’d already built one.

- Tasting our own champagne: We wanted to see if we could. Ultimately, we wanted to see if Parabol could be used for this off-script use case, and learn from the spots where we ran into challenges. This won’t be relevant for other teams, but for us, tasting our own champagne was a key reason why we used our retrospective app to synthesize UX research.

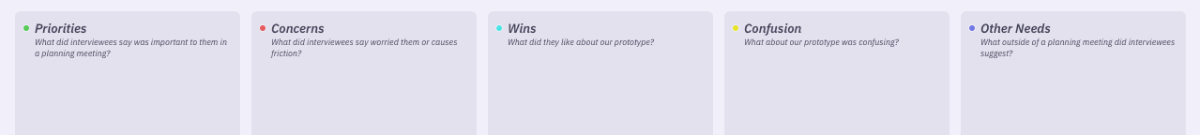

So that’s the ‘why’. For the ‘how’, first, we created a custom retrospective template specifically for our research study. In hindsight, we ran into some challenges with reflections that didn’t fit into one of these categories, and we also found that we wanted to know which user a reflection came from. In future, we might try having a column per user.

Then, we opened the retrospective meeting when we started conducting interviews. That meant folks could add in their reflections directly after an interview, rather than needing to wait till later and hope they remembered.

Throughout the week, we added Reflections asynchronously and then scheduled a synchronous meeting to process those reflections together.

We found that the multi-player grouping in Parabol was really useful for processing a large number of reflections together in a relatively limited time – we grouped 180 reflections into 38 topics in less than an hour.

We also found color-coding to be helpful in getting the pulse of a particular topic. For each topic, we could see which prompt its reflections came from because of the color on the upper-left corner of each card, and get a sense of whether something was working well or causing challenges.

After our sync meeting, we realized we didn’t need to do anything else to create an artifact of our findings – our meeting summary included the top topics, reflections that were part of those topics, and even the option to review comments we’d left for ourselves in discussion threads throughout the meeting.

An online retro app may not be the right tool for every team to synthesize their UX research, but for us, we found we could process a lot of information quickly and collaboratively, which is exactly what you want from any research pattern-finding meeting.

Do it yourself: leverage your existing audience and tools to run an effective UX research study from a distance

By using our existing tools – our email list, HubSpot, Notion, Parabol – and supplementing with specific tools like Respondent.io, we managed to run a UX research study remotely without losing our minds.

The results speak for themselves.

With just a few weeks of effort, we greatly leveled up our understanding of how agile teams conduct backlog grooming ceremonies today, the challenges they run into, and the opportunities we have to help them.

In the process, we engaged our whole product team without requiring anyone to make research their full-time job.

If you’re looking for a step-by-step process, this is it:

- Define who you need to speak to, and ask yourself: how are they similar? How are they different?

- Reach out to your existing list, qualify the folks who want to speak with you, and book meetings with them

- Use tools like Respondent.io to supplement your existing audience, and book them for meetings around the same time

- Create an accessible schedule for the team to sign up for slots, and monitor it to make sure the opportunity is spread across the team

- Collaborate on an interview script, then place the final template in an accessible spot for both facilitators and note takers

- Use a remote-first tool to synthesize research findings – open it early to add thoughts as you go, work together to group findings, discuss topics one-by-one and have a digital artifact of your conversation to refer back to.