#479 – Agent Epoch

Friday Ship #479 | February 21st, 2026

This week we began a concentrated exploration of AI Agents.

My first computer was an Apple ][+. Even though I do remember the rotary dial on a telephone (and cursed anybody with a zero digit in their phone number), I don’t remember a time before computers. I was born in 1980, and I remember the Apple, with its monochrome green screen, always being a fixture of my father’s home office.

I’ve lived through many computing revolutions since the Apple: the introduction of the PC, BBSes, Microsoft Windows, the Internet, Linux, the smartphone, “the Cloud,” streaming and SaaS, and most recently LLMs and GPT.

In my 40-some years I haven’t seen anything as obviously groundbreaking as agentic computing.

The Small, Big Leap

Looking back at my notes, I began experimenting with GPT-2 in 2020. Tinkering with GPT-3 later that same year was the first time I started to dream of new, never-before-possible applications: autocompletion, grouping, filtering, and semantic search. GPT-4 (2023) and GPT-4o (2024) were the first releases that made me feel, “ok, these models are starting to become good sparring partners for my own creativity.”

It wasn’t until 2025 that the Gemini 3 model series made me feel that the job of software engineer (and related disciplines) had changed forever: from authoring code directly to crafting prompts and reviewing code.

Here and there I had experimented with implementing Model Context Protocol (“MCP”) servers and letting model harnesses use them. Some of my first experiments involved extracting information from task-tracking and communications systems to help me give performance review feedback. I remember thinking, “wow, this task would have taken me days, and now it takes me longer to read the final product than to assemble it.”
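For readers who haven’t built one: MCP messages are JSON-RPC 2.0, and the two methods a harness leans on most are `tools/list` (discover which tools a server offers) and `tools/call` (invoke one with arguments). The Python below is a minimal sketch of that request/response shape using only the standard library. It is not a conformant MCP server: it skips the `initialize` handshake, capability negotiation, and the stdio/HTTP transport, and the `count_open_tasks` tool and its `TASKS` data are hypothetical stand-ins for the kind of task-tracking lookup described above.

```python
import json
from typing import Any, Callable

# Hypothetical in-memory task tracker standing in for a real tasking system.
TASKS = [
    {"assignee": "alice", "status": "open"},
    {"assignee": "alice", "status": "done"},
    {"assignee": "bob", "status": "open"},
]


def count_open_tasks(assignee: str) -> str:
    """Hypothetical tool: count open tasks assigned to one person."""
    n = sum(1 for t in TASKS if t["assignee"] == assignee and t["status"] == "open")
    return f"{assignee} has {n} open task(s)"


# Tool registry: name -> (description, JSON Schema for arguments, handler).
TOOLS: dict[str, tuple[str, dict[str, Any], Callable[..., str]]] = {
    "count_open_tasks": (
        "Count open tasks assigned to a person",
        {
            "type": "object",
            "properties": {"assignee": {"type": "string"}},
            "required": ["assignee"],
        },
        count_open_tasks,
    ),
}


def _reply(req_id: Any, **body: Any) -> str:
    """Wrap a result or error in a JSON-RPC 2.0 response envelope."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, **body})


def handle(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request string and return the response string."""
    req = json.loads(raw)
    req_id, method = req.get("id"), req.get("method")
    params = req.get("params") or {}

    if method == "tools/list":
        tools = [
            {"name": name, "description": desc, "inputSchema": schema}
            for name, (desc, schema, _) in TOOLS.items()
        ]
        return _reply(req_id, result={"tools": tools})

    if method == "tools/call":
        entry = TOOLS.get(params.get("name"))
        if entry is None:
            # -32602 is JSON-RPC's "Invalid params" code.
            return _reply(req_id, error={"code": -32602, "message": "unknown tool"})
        text = entry[2](**params.get("arguments", {}))
        return _reply(
            req_id,
            result={"content": [{"type": "text", "text": text}], "isError": False},
        )

    # -32601 is JSON-RPC's "Method not found" code.
    return _reply(req_id, error={"code": -32601, "message": f"unknown method: {method}"})
```

A harness would first send `tools/list`, show the returned schemas to the model, and then forward the model’s chosen call. For example, `handle('{"jsonrpc": "2.0", "id": 2, "method": "tools/call", "params": {"name": "count_open_tasks", "arguments": {"assignee": "alice"}}}')` returns a result whose text content is `alice has 1 open task(s)`.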

I read with curiosity about the development of OpenClaw (née Clawdbot) and how this agentic application was cajoled into building its own “social network” where bots exchanged posts. I didn’t get it. It didn’t feel revolutionary. “Ok,” I thought, “so it’s just a model running in a loop. No biggie; all the big players’ apps now keep memory of past prompt interactions.” Boy, was I wrong.

When January 2026 rolled around, I began giving my colleagues instructions on how to add the BigQuery MCP server to their Claude or GPT clients so they could ask questions of our company’s data in natural language. To me, this was the “killer” app: rather than needing to understand how our data relates and tediously hand-rolling SQL (or describing pseudo-code to GPT and letting it take its best shot), people can just ask the business question they want answered and, BAM, the result comes back. Magic. I wanted to make this magic self-serve, with no setup instructions. That’s when I set up Nanoclaw.

Enter Nanoclaw

Nanoclaw is an application that, borrowing the tagline of its predecessor OpenClaw, “is an AI that actually does things.” Nanoclaw is also described as a “personal assistant,” but I think that label fits it about as well as “typewriter” fits a modern computer: they both have keyboards, but that is where the similarities stop.

At its heart, Nanoclaw is just a program that reads a prompt from a communications channel (like WhatsApp or Slack) and runs it against a model via an API. The difference is that the model has permission to use tools, that is, to use credentials to call the APIs of local and internet services, and the program remembers what it did in the past by keeping a database. This makes it very effective at handling a prompt like, “research venture investment firms that invest in category X, determine my closest connection to any of the partners at these firms, and create an outreach database in Notion I can use to manage my outreach process…”
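The loop described above (prompt in, model call, tool calls, memory saved) can be sketched in a few dozen lines of Python. This is a minimal sketch under loud assumptions, not Nanoclaw’s actual code: `fake_model` is a hypothetical scripted stand-in for a real model API (a real harness would send the conversation plus tool schemas to a provider and parse its reply), `lookup_calendar` is a hypothetical tool, and the “database” is a single SQLite table of past messages.

```python
import json
import sqlite3
from typing import Callable


# Hypothetical tool. A real one would use stored credentials to call an
# external API (calendar, CRM, Notion, ...); this one returns canned data.
def lookup_calendar(day: str) -> str:
    return json.dumps({"day": day, "events": ["10:00 standup"]})


TOOLS: dict[str, Callable[..., str]] = {"lookup_calendar": lookup_calendar}


def fake_model(messages: list[dict]) -> dict:
    """Hypothetical stand-in for a model API call.

    On a fresh prompt it requests one tool call; once a tool result is
    present for the current prompt, it turns that result into an answer.
    """
    last_user = max(i for i, m in enumerate(messages) if m["role"] == "user")
    this_turn = messages[last_user + 1:]
    if not this_turn:
        return {"tool": "lookup_calendar", "args": {"day": "monday"}}
    data = json.loads(this_turn[-1]["content"])
    return {"answer": f"On {data['day']} you have: {', '.join(data['events'])}"}


def open_memory(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the message-history table the agent remembers with."""
    db = sqlite3.connect(path)
    db.execute("CREATE TABLE IF NOT EXISTS memory (role TEXT, content TEXT)")
    return db


def run_agent(
    prompt: str,
    db: sqlite3.Connection,
    model: Callable[[list[dict]], dict] = fake_model,
    max_steps: int = 5,
) -> str:
    # Reload past turns so earlier work informs this one.
    rows = db.execute("SELECT role, content FROM memory ORDER BY rowid")
    messages = [{"role": r, "content": c} for r, c in rows]
    start = len(messages)
    messages.append({"role": "user", "content": prompt})

    answer = "Stopped: step limit reached."
    for _ in range(max_steps):
        reply = model(messages)
        if "answer" in reply:
            answer = reply["answer"]
            messages.append({"role": "assistant", "content": answer})
            break
        # The model asked for a tool: run it and feed the result back in.
        result = TOOLS[reply["tool"]](**reply["args"])
        messages.append({"role": "tool", "content": result})

    # Persist this turn (prompt, tool results, answer) as memory.
    db.executemany(
        "INSERT INTO memory (role, content) VALUES (?, ?)",
        [(m["role"], m["content"]) for m in messages[start:]],
    )
    db.commit()
    return answer
```

With `db = open_memory()`, calling `run_agent("What's on my calendar Monday?", db)` returns `On monday you have: 10:00 standup` and leaves three rows (prompt, tool result, answer) in memory. The `max_steps` cap is the design choice worth noting: because the model decides when it is done, the harness needs some bound so a confused model cannot call tools forever.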

The truly mind-blowing thing about Nanoclaw, however, is how it is set up: you ask it to modify its own code. Then, as you want to personalize it further and add new capabilities (say, connecting to your Google Calendar or some custom API), you just ask it to modify itself…and it does.

Software that evolves itself is a whole new beast, and we have no idea where it will lead. I am excited. I am frightened. The future is going to be even wilder and weirder. And writing from Minneapolis in 2026, I can say the present is already wild and weird enough, thank you.

What we’ve been up to…

We’re a few days into hacking on our own Nanoclaw bots. I’ve been writing a bot to help me run outreach, surface insights, and manage my calendar. Another of us has been automating tedious DevOps tasks. Still another is using a bot to identify the large number of users who creatively dodge paying by appearing to be under our free-tier limit when they are not.

A prayer for the future

All of us are taking work off our plates and handing it to these bots. This is likely the greatest single leap in knowledge-work productivity ever, at least an order of magnitude greater than what LLMs brought us. The question is: who will capture the value of all this productivity? Will we, the workers, get more free time and a fairer wage, or will this level of productivity simply become the new norm? My hope is that one day, if the bots can take the work off our plates, we can all just relax and take a well-deserved chill.

Metrics

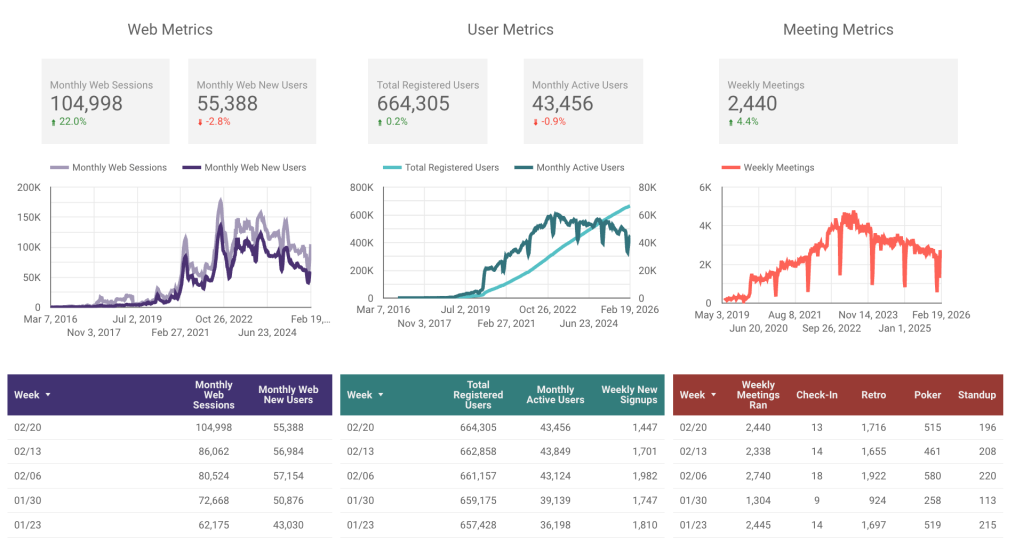

A very odd pop in web traffic this week: up over 20%! We’re also seeing a 4% rise in the number of meetings run. All other metrics were nearly flat.

This week we…

…started the first week of cool-down, following Shape Up Cycle 12.

…began experimenting with a new “Unlimited Tier” for very large organizations. Our hope is that, by removing the worry about scaling into the next tier, we can encourage expansion.

Next week we’ll

…plan Shape Up Cycle 13.