#486 Cognitive Surrender

Friday Ship #486 | April 11th, 2026

This week we spent time planning and architecting our next AI features.

In particular, we’ve been focused on making Parabol easier for AI agents to use, not to replace human beings but to enhance how human beings work together. We envision retrospectives populated by observations drawn from interactions in backlog management systems, recurring issues surfaced by observability platforms, or customer success interactions.

Keeping Humans Centered

Last week I traveled from Minneapolis to L.A. with my daughter to spend the Passover holiday with my family. My brother and my sister-in-law traveled in from New York. We talked some about the foreseeable societal impacts of AI. My sister-in-law recently graduated from Columbia with her master’s in psychology and has been keeping up to date with the latest primary literature. Over the past few days she sent along fascinating papers on what we know of the measurable effects of AI on the human psyche. One term, “Cognitive Surrender” (Shaw & Nave, 2026), caught my attention.

The general idea is that our systems of reasoning need to be exercised in order to develop properly. Even if an individual has developed a high-functioning reasoning system, that system can atrophy if not used. The use of AI may impede the development and maintenance of healthy reasoning:

The AI system creates an illusion of competence and contributes to a user overestimating their awareness of what was generated and/or reviewed by the AI system. As a result, introspection accuracy and user control decrease (Hoch et al., 2023). Both decreased introspection accuracy and user control impede adolescents and young adults whose executive functioning has not yet been developed (Iley and Medimorec, 2024; Sun, 2024).

And from Jose et al. 2025:

The reward systems used by AI tools (i.e., rapid feedback, little resistance, and expected outcomes) create an inclination toward ease vs. depth. When ease is attained with less effort (e.g., difficulty in formulating a question), one may discontinue engaging in high-level thinking. These patterns of attentional adaptation under AI influence set the stage for understanding how developing minds, particularly adolescents, navigate delegated cognition…If AI-based design consistently thwart this “cognitive balance,” then the likelihood increases that AI-based design could create an environment that encourages efficiency at the expense of personal growth and development…Unlike cognitive offloading, which is typically strategic and task-specific (e.g., using GPS to navigate), cognitive surrender entails a deeper transfer of agency. Whereas cognitive offloading is a strategic delegation of deliberation, using a tool to aid one’s own reasoning, cognitive surrender is an uncritical abdication of reasoning itself. It reflects not merely the use of external assistance, but a relinquishing of cognitive control: the user accepts the AI’s response without critical evaluation, substituting it for their own reasoning

For us, a paramount design principle is that AI may remove tedium, but it must not reduce agency. Humans must stay at the center.

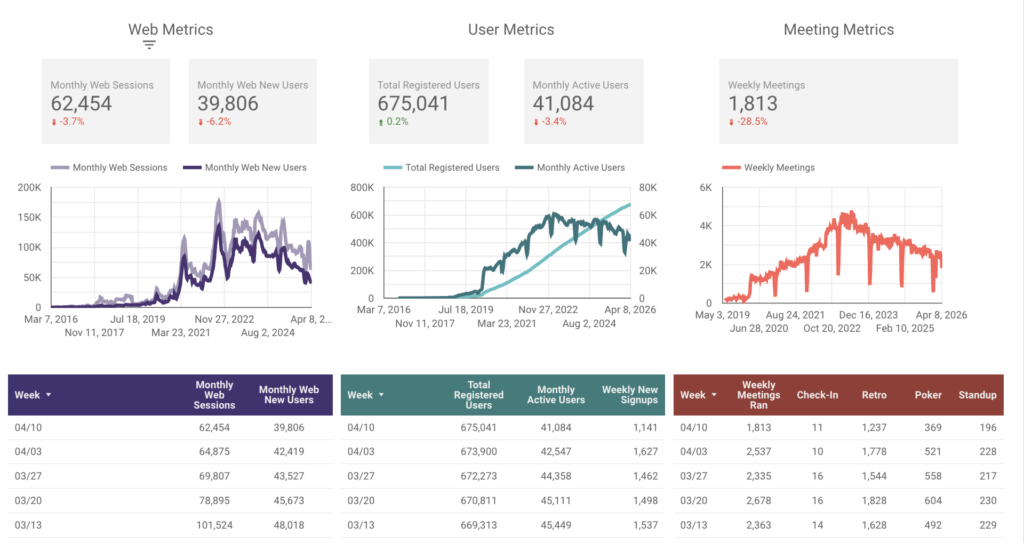

Metrics

A big dip in retrospective meetings run this week. Curious! All other metrics seem within expectation.

This week we…

…planned ShapeUp Cycle 13. After six weeks, we should have some bold new features in place supporting agentic AI.

…prepped to launch a new instance at the U.S. Air Force Research Lab.

Next week we’ll…

…start week 1 of 6 of ShapeUp Cycle 13.